US GETS A MULLIGAN IN CARBON MITIGATION

September 21, 2012

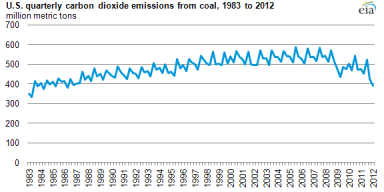

Used as we are to being accused of being extravagant users of energy, the recent report from the Energy Information Administration (EIA) is an eyebrow raiser. It states that US carbon emissions from energy use dropped to levels not seen in twenty years. Before you pop the Champagne (and thus add CO2 to the air!), note that these statistics are just for the first quarter of 2012. And in examining the principal factors one is forced to conclude that the country has, in golf terms, received a mulligan (thank you Tim Profeta at Duke for that characterization).

The accompanying analysis by the EIA is sparse. It cites three reasons. One is the warm winter and associated reduction in use of heating fuels. But these are quarter to like quarter comparisons, and we have had warm winters before in the last decade. The other two reasons are more interesting from the standpoint of a go forward national policy.

Cheap shale gas driving a switch from coal to natural gas in the generation of electricity is clearly a big factor. For the oldest coal plants it was more cost effective to switch to gas than to retrofit pollution control equipment. To the extent this startling carbon mitigation statistic has been discussed at all, the principal attribution has been to this factor. Studies making the direct quantitative connection are not in evidence. But the underlying assumptions regarding the near halving of CO2 from substitution of gas for coal are not disputed. Activism by Sierra Club and others continues to shut down coal plants. One could therefore reasonably expect continuation of this trend towards gas substituting for coal. New wind generation capacity can also be expected, but not as fast.

The third reason cited by the EIA is reduced consumption, largely attributed to the recessionary conditions. The concurrent high gasoline prices likely were a factor. Whatever the reasons, gasoline consumption has plummeted in the last nine months, from about 9.1 million barrels a day in June, 2011 to 8.5 in April, 2012. An improving economy ought to moderate this drop rate even if oil prices remain high.

Looking out to the next two decades, we have the federal target for a drastic improvement in the fuel efficiency of vehicles: 54.5 miles per gallon by 2025. New vehicles today average 24 mpg. A more than doubling of fuel efficiency ought to have a direct effect on emissions per mile driven.

But there is always Jevons’ Paradox to worry about. Jevons was an economist a century and a half ago who predicted that when devices become more efficient people simply use them more, thus offsetting the efficiency improvement. Modern economists term this the rebound effect. In the automobile example, this could entail per capita miles driven going up because the consumer would note the reduced cost per mile. To the extent that this happens, the positive effect of increased efficiency on CO2 emissions would be dampened. In a recent discussion the economist Richard Newell at Duke, who until recently headed the aforementioned EIA, opined that the rebound effect would be relatively small in this case. For most people work related miles are a principal component. This is not going to change just because the cost goes down.

But fuel economy standards apply only to new vehicles. So they will take a while to become a major factor. Earlier gains would be made through fuel substitution with less polluting alternatives. The simplest ones are natural gas vehicles for public transport and light duty vehicles. Another straightforward thrust would be substitution of up to 25% of diesel with di-methyl ether (DME) without engine modifications. DME from cheap natural gas would cost much less than diesel, has zero particulate emissions and a very high cetane rating. Particulates (with associated health effects) are a less publicized target for mitigation. The deployment of DME in the diesel infrastructure could be almost immediate in the case of captive businesses such as vehicle fleets, and compressors and pumps for all manner of industrial endeavor, including fracking for gas and oil.

While this country can take some satisfaction from the carbon mitigation statistic we cannot rest on those laurels. We may indeed have been granted a mulligan by the coordinated effects of shale gas related coal substitution and a weak economy using less energy. A tax on emissions, implicit or explicit, is not on the cards. We need policy that is industry and consumer friendly and yet effective in reducing emissions. Natural gas substitution of coal and increased proportion of renewable energy will likely continue. We need a national policy and associated research that makes deep inroads into substitution of oil based transportation fuel with less polluting domestic alternatives.

Vikram Rao

HIGH OCTANE

September 8, 2012

The words high octane conjure up a vision of something powerful, almost inexorable. Scribes in the press refer to a high octane economy. As it turns out, in the transportation world, high octane is not necessarily better. But it could be.

The octane number is a characteristic that defines the ability of a fuel to tolerate high compression engines. In such an engine the fuel is compressed in the cylinder to a greater extent than in regular engines. Consequently, when ignited, the energy released is greater than in the conventional cylinder. This provides a high torque to the wheels. More importantly to the fuel economics and the environment, more energy is produced for the same amount of fuel. In simple terms, the efficiency of the engine is increased.

The octane number of the fuel determines whether this can be accomplished. If the octane number is too low, the fuel will ignite prematurely. This is known as “knocking” in the parlance because of the sound produced, a bit like a rattle. Each car engine manual defines the octane rating permitted. Regular engines use 87 octane fuel and most others use 91 octane. Some sporty cars require 93 octane. This is why gas stations carry three grades. Inexplicably, though, the mid grade is 89 octane, not 91. No car is rated for that value. Ultra-high compression engines such as Indy race cars require octane numbers over 100. That is why they use pure ethanol or methanol, both with octane numbers over 110.

But for a given car, higher octane is not necessarily better. The 93 octane grade often carries the descriptor “super” or “hi-test”. This can be deceiving. A car designed to use 87 octane gasoline will derive no benefit from the higher grade. This is a case of more not being better.

But more can be better if we change the engine. While this may seem an impractical suggestion, consider first an important fact. Each of the three most viable substitutes for oil derived transportation fuel has an extremely high octane number. Ethanol, methanol and methane (the principal constituent of natural gas) clock in at 113, 117 and 125, respectively. Comparing just the liquids in that list, regular gasoline scores an anemic 87.

But ethanol has 33% less energy content and methanol has about 45% less. This is why E85, which contains 85% ethanol, has never been popular with consumers. Flex fuel vehicles (FFV’s) tolerate any mixture ranging from pure gasoline to E85. But without subsidies E85 costs as much as or more than gasoline, especially in drought years such as this. And it delivers 28% fewer miles to the gallon. Not a formula for consumer acceptance.
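The 28% figure follows from the 33% energy deficit of ethanol. A minimal sketch of that arithmetic is below, assuming energy content blends linearly by volume and that mileage scales with energy per gallon; both are simplifying assumptions, and the function name is purely illustrative.

```python
# Energy deficit of ethanol per gallon relative to gasoline, as quoted in the text.
ETHANOL_DEFICIT = 0.33


def blend_mileage_penalty(alcohol_fraction, alcohol_deficit):
    """Fractional loss in miles per gallon for a gasoline/alcohol blend.

    Assumes energy content blends linearly by volume and that mileage is
    proportional to energy per gallon (engine efficiency unchanged).
    """
    return alcohol_fraction * alcohol_deficit


e85_penalty = blend_mileage_penalty(0.85, ETHANOL_DEFICIT)
print(f"E85 delivers about {e85_penalty:.0%} fewer miles per gallon")
```

The same function can be applied to any blend ratio a flex fuel vehicle might see, since the penalty under these assumptions is simply proportional to the alcohol fraction.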

Methanol is much cheaper to produce than ethanol, especially with cheap shale gas. So even taking into consideration the lower energy content an M85 blend would be good value. But one would have to fill up about twice as often as with gasoline.

The principal disadvantage of methane (natural gas) as a fuel is the low volumetric density. Compressed natural gas (CNG) occupies four times the volume of gasoline. Ongoing research at RTI and elsewhere targets halving that disadvantage.

An elegant solution would be a super-high compression engine, with compression ratio around 16. Regular engines are just under 9. At these high compressions, all three gasoline substitutes would deliver very high efficiencies. One could expect the energy density disadvantage to be completely eliminated. Methanol in particular would be significantly cheaper than gasoline per mile driven. At present low cost natural gas would be the raw material of choice. But biomass sourced methanol will be in our future. Policy measures to ensure this ought to include federally funded research to reduce the cost.

The President recently set a goal of 50% reduction in imported oil by 2020. A significant component of that will be more shale oil from the Bakken and similar deposits. We are already producing 10% of our requirements from there, up from essentially zero a scant five years ago. But a big factor ought to be displacement of oil by alternatives. The three discussed above can play big parts. They would have starring roles if we standardized on FFV’s with high compression engines.

In one fell swoop we would also take a major stride towards the goal of 54.5 miles per gallon for passenger cars by 2025. This ambitious goal is likely not realizable without a major technical advance such as efficient engines running on high octane fuels. Finally, such engines are relatively simple to design and produce. Detroit ought not to balk.

Vikram Rao

See announcement of web cast by Vikram Rao and Ann Korin

WHY STORE BOUGHT TOMATOES TASTE LIKE CARDBOARD

August 9, 2012

Well so OK, not quite cardboard, that distinction belonging to All Bran cereal. But you know what I mean. Beautiful, red, evenly round but bland. Conduct a taste test of one such against one from your closest farmer’s market. In particular, pick a variety like Cherokee Purple, which certainly has not gone through hybridization hell recently.

Now there is a paper from Ann Powell at the University of California, Davis, arguably the Mecca of agricultural research (sorry N C State), and collaborators at Cornell and elsewhere. The original paper, in the journal Science (June 29, 2012 issue), is tough going for non scientists, but reasonably approachable. I am directing you to a popular story describing the paper, which is eminently readable and has the relevant facts.

The essence of the work is that the industry devised the tomato for uniform ripening behavior. They found a mutation in the 1920’s that caused this trait and simply selected for it. This allowed the farmer to harvest evenly ripened fruit for transport to market. The fruit also ripened to an even red color. Before ripening, these fruit are uniformly light green. Your garden tomatoes will have variations in color from beginning to end, shades of light and dark green to start. The aforementioned Cherokee Purple is particularly so, and the flesh is a gorgeous purple. Of course if you prefer plain vanilla red (is that oxymoronic or what?), this is not the one for you. Go for German Johnson or Brandywine (shown in the image). Names with character, in contrast to Big Boy, Early Girl; not picking on Burpee, but hey, I did not name them, they did.

But the genetic manipulation to obtain this trait inadvertently suppressed sugar. The finally ripened fruit was then compromised on taste and flavor. What made this discovery possible was identification of the gene responsible for the uniform ripening. The investigators then were able to locate the position on the chromosome and thence deduce that the sugar producing protein was absent.

This research was uniquely possible because of an international effort to sequence the tomato genome. Took them nine years and success was announced earlier this year. The tomato genome? Seriously? Apparently this was driven in part because the tomato belongs to a family of other vegetables. Oddly enough it has 92 percent of genes in common with the potato. The potato decided to go in the direction of starch in tubers. Tomatoes opted for the above ground show of sugar and color. Until we messed with nature, that is. Others such as eggplant are country cousins.

So is the tomato a fruit or a vegetable? Botanists see it as a fruit. Most of us are likely divided. Although what we just divulged here regarding sugar content might change the vote of some. But the Supreme Court has decided this for us. And you thought they only worried about hanging chads and curbing Arizonian sheriffs. Back in 1893 the Nix family disputed import duties on tomatoes from the West Indies on the argument that tomatoes were a fruit, not a vegetable. They lost. So now it is law.

While humans have 23 pairs of chromosomes, tomatoes have only 12. Interestingly, though, they have about 32,000 genes to our 24,000. Some believe the additional complexity is because they have to defend against the environment while remaining static. We can escape on feet.

So, the future likely holds tasty tomatoes that do not sacrifice shelf life. If genetically modified, this ought to be straightforward. If they try to go about it through conventional breeding techniques, it could take longer. In India, a genetically modified version of a vegetable staple, the eggplant, was developed for better yields and disease resistance. A vociferous minority essentially squelched that. In the US almost all our corn and soy bean are genetically modified but most people are not aware. If a labeling push goes through as law, opinion could change. Meanwhile, I grow my own Cherokee Purple and Sun Gold.

Vikram Rao

PUNCTURES IN HELIUM BALLOON

July 17, 2012

The discovery of the Higgs Boson particle announced on July 4 in Geneva was a science event of moment. For those still in the dark on this matter (by the way this is a poor astrophysics joke) this discovery essentially proved the Standard Model of elementary particles and the forces they exert on each other. The Economist devoted the front cover to it in a recent issue. And none of this would have been possible without helium. And we may be running out of it.

Helium is the second most abundant element in the universe, after hydrogen. But terrestrially it is in relatively short supply, at least in usable concentrations. But first a word or two on what it does for us.

Helium is known as a noble gas because it is relatively inert, that is it does not react easily with other species. Use in welding relies on this property. But most know it for being lighter than air, leading to party balloons and blimps. The most famous airship in history, the Hindenburg, was lost because of the flammability of that other light gas, hydrogen. Helium when inhaled changes the resonance of the vocal tract and one sounds like Alvin the Chipmunk. A somewhat more esoteric property is the ability to stay a liquid down to a temperature of nearly Absolute Zero, which is about -273 C. That may seem to be just a number, but it is the temperature at which all molecules essentially stop moving. That is pretty daunting. Fortunately, it is believed that this temperature cannot be achieved. But liquid helium brings one within whispering distance.

Extreme cold is needed for certain magnets to be effective, especially in the medical diagnostic application of Magnetic Resonance Imaging, commonly known as MRI. Such magnets are also critical to the operation of the Large Hadron Collider. This particle accelerator at CERN in Geneva is the facility that discovered the Higgs Boson.

So, it is critical for important devices and industrial practices and is considered to be a strategic commodity. And yet, it is cheap enough to be used in party balloons. This oddity is due to the fact that the US government decided in 1960 to create a strategic reserve and then bleed it out for use. This sale is then made at a relatively low cost, today at about $75 per thousand cubic feet (mcf) for raw helium; processed gas costs more. 75% of the helium supply in the world is from the US, and half of that is from the reserve. Consequently the world price is determined by the US government release from Cliffside. This is the name of the storage facility near Amarillo, Texas.

To make things even odder, Congress decided in 1996 to get the feds out of the helium business by 2015, seemingly to encourage privatization of the business. Investors did not get the memo. We are in 2012 now; nearly 35% of the world’s supply comes from Cliffside. Drawing it down to zero by 2015 sounds Draconian even for the current less-than-functional Congress. A bill to extend the deadline has been wallowing around for a while. They inherited the problem for sure, but are likely too busy with other matters such as dealing with Justice Roberts’ epiphany.

So, where does the helium come from presently and what is possible? All the US sourced helium is from the Hugoton natural gas field and from one other in Wyoming. The content is up to 1.9%, which is anomalously high relative to other deposits. At these concentrations it pays to separate it out. The hot area of shale gas is not a promising source. The small helium atom tends to escape in the reservoir and so one would expect the concentrations to be very low. In fact, unfortunately, helium is generally found associated with high nitrogen containing natural gas. This usually renders it uneconomical.

An interesting source would be a by-product of liquefied natural gas (LNG) production. When the gas is chilled to -162 C to make the methane liquid, the remaining vapor is rich in helium even if the original gas has very little of the stuff. This is in fact happening in Qatar despite the helium being less than 0.1%. Qatar is now the next highest producer to the US. One could expect the same in Australia, with massive LNG facilities, and possibly even in the soon to be permitted Cheniere Energy plant in Louisiana, although for that one the concentration could still be challenging if shale gas is the raw material source. But if LNG becomes popular for long haul transport and the gas source is chosen carefully, helium could be a by-product.

Suffice it to say that if something is not done to improve helium supply much of MRI could be rendered MRIP: magnets resting in peace.

Vikram Rao

“COLOR BLIND” VOTE MORTIFYING BUT NOT MATERIAL

July 12, 2012

When Becky Carney, a Democratic legislator, accidentally hit the green voting button rather than the red, it made national news. I am dubbing this the Color Blind Vote on account of the fact that confusing red with green is a trait of those with this affliction. Ms. Carney does not take refuge behind this condition. She attributes it to mental and physical fatigue. We have all been there; she just happened to be on the national stage.

In the estimation of one national commentator, this one had the biggest “ouch factor” in the political gaffes of the week. It easily lapped the one by Bobby (aka Piyush) Jindal for his slip in use of the words “Obamney care”. Romney’s VP list just got shorter by one, one imagines. Freud may have been hard at work on that one. Bobby does advocate government actions to reduce the uninsured pool, but only when he does it (at the state level).

Ms. Carney’s was the casting vote that overrode Governor Perdue’s veto of the Senate bill S820 to permit fracking in North Carolina. In her explanation the governor is reported to have stated general support for shale gas production. But she felt that the bill as written did not have sufficient environmental safeguards. So she vetoed it. It was overridden by the deciding Color Blind Vote.

Whilst undoubtedly mortifying, the vote in question will likely not matter very much. In saying this I am not being prescient with regard to the color of the party of the governor elected in November. My reasoning is based on the facts and logic related below.

In view of the shale gas related economic boom in Pennsylvania, and soon to be in West Virginia and Ohio, our state has a right to understand the extent of the resource. Armed with this information it could choose to permit production or not. In my view, as stated in previous blog postings and my recent book, such activity ought to be permitted only if accompanied by strict measures to assure stewardship of the environment and accommodation of the interests of the local communities. Pennsylvania suffered the pain of going out in front while seemingly not quite prepared. We can avoid that fate. The Pennsylvania governor’s Marcellus Shale Advisory Commission report is comprehensive and a good place to start.

The problem is that we really do not know the extent or quality of the resource. Sure, the US Geological Survey finally released their report. Their assessment is an average of 1.66 trillion cubic feet of natural gas and 83 million barrels of associated natural gas liquids (NGL’s).

While the gas numbers are unexcitingly low, the NGL figure may be more troubling. I recalculated the above figure to be able to compare it to other areas. I get about 2 gallons per thousand cubic feet (mcf). My research has shown that the current producing areas range from 4 to 12 gallons per mcf. I have used a number of 7 gallons for Marcellus (PA, OH, WVA) calculations in the book. The key point is this. With the historic low prices for natural gas, most of the profit is in the NGL’s. This is because the prices of those components are largely pegged to the price of oil. Oil today runs over five times the price of natural gas on the basis of energy content. So, unless our figures get revised upwards from new data, exploitation prospects are bleak until natural gas prices pick up due to demand creation.
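For readers who want to check the recalculation, here is a rough sketch. It assumes the standard 42 US gallons per barrel; the post does not state the conversion used, so that factor and the function name are assumptions made for illustration.

```python
GALLONS_PER_BARREL = 42  # standard US oil barrel (assumed conversion)


def ngl_yield_gal_per_mcf(ngl_barrels, gas_cubic_feet):
    """NGL yield in gallons per thousand cubic feet (mcf) of gas."""
    gallons = ngl_barrels * GALLONS_PER_BARREL
    mcf = gas_cubic_feet / 1_000
    return gallons / mcf


# USGS mean estimates quoted above: 83 million barrels NGL, 1.66 trillion cubic feet gas.
nc_yield = ngl_yield_gal_per_mcf(83e6, 1.66e12)
print(f"{nc_yield:.1f} gallons of NGL per mcf")
```

Under that assumption the result sits at the bottom of, or below, the 4 to 12 gallon range cited for the currently producing areas.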

The natural gas figure is a good deal lower than was expected by state geologists. I have little doubt that this was due to the paucity of the data. Sparse data inevitably causes confidence limits to be lower, and that drives down the figures. So, the only means by which the state could really know how much gas it has is by encouraging more data to be collected.

Now, here is the rub. By now the whole energy world knows that horizontal drilling and fracking are essential for economic production. If these are forbidden by law, nobody will invest in data gathering. What would be the point?

Consequently, allowing horizontal drilling and fracking to be lawful is necessary to know where we stand. I am not aware of any state in the union where they are outlawed, except Vermont recently making fracking unlawful. The Vermont action is amusing. This is akin to Texas outlawing tapping of maple trees for energy conservation reasons (up to 50 gallons of water must be boiled off to produce one gallon of syrup).

Shale gas is not being produced in most of the states where fracking is lawful. In the end exploitation decisions will be made by business people assessing the true profitability of the production, including the factoring in of the costs of environmental prudence.

Vikram Rao

Some of these ideas appeared in an op ed piece in the N&O on July 11, 2012

IDLE CONJECTURE

June 20, 2012

A recent news story in the News and Observer relates an automobile accident at the RDU airport. The driver is reported to have left his car idling, and left the vehicle to pick up an incoming passenger, secure in the belief that the car was in a stationary state. One presumes he thought that it was set to the “park” setting on the gear box. Well, apparently it was not and it started to move. Noting this he jumped in and inadvertently hit the accelerator rather than the brake and hit someone, injuring her.

Our point, and I realize you the reader were desperately attempting to discern this, is not what he did, but what he did not do. He did not switch off the engine. This is more common than not. Who has not seen the line of cars picking up kids at the YMCA or some such establishment, each one of them idling away (of course with a hybrid such as the Prius that is not the case simply because that decision is taken out of their hands; stationary hybrid engines stop by design)? To study the phenomenon, we shall first normalize for weather and assume that keeping the air conditioning on was not the driver. Even without actual data I think we can safely assume that this happens in all manner of weather.

Otherwise environmentally conscious people do this, so what is going on? Getting past sheer laziness or carelessness, most are likely still steeped in an old dogma: starting an engine uses an inordinate amount of energy and it is better to keep it running. There used to be an old rule of thumb of thirty seconds as a breakeven time. If you took a poll of otherwise highly educated friends regarding the breakeven time you are likely to encounter numbers ranging up to a minute. Even with young people who became drivers well after the era of carburetors, the dogma is entrenched, or they have simply not thought about it.

In the 70’s and 80’s cars were switched from carburetors to fuel injection. The former bled in the air for combustion by suction, while the latter pressurized the mixture precisely and in fine droplets that were injected. This allowed for a leaner fuel mix and the cooling effect in the cylinder helped improve the effective octane rating slightly. Electronically timed injection happened in the early 90’s. So if your car is younger than twenty years, it has this feature.

The significance is that there is virtually no penalty for stop and start. To test for the breakeven time experiments were conducted by engineers of the Florida chapter of the American Society of Mechanical Engineers on V6 powered vehicles. They concluded that 5 seconds of idling equated to the fuel required to start the engine (6 if the air conditioner was on). Does this mean we should stop the engine at red lights? Probably not. The time to take an automatic out of park and start the engine is several seconds. Also, even if one did do this flawlessly, the car was likely not designed for such frequent restarts and something would wear out. The fuel economy part is correct but something else may give. In hybrids the start is with an electric motor, which is designed for the purpose and highly reliable.
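The breakeven idea can be put in a few lines. The sketch below measures fuel in "seconds of idling" so that no fuel flow rate has to be invented; the 5 second restart cost is the figure quoted above, while the function name and the sample waiting times are hypothetical.

```python
RESTART_COST_IDLE_SECONDS = 5  # breakeven figure quoted above (6 with the A/C on)


def fuel_saved_by_switching_off(wait_seconds, restart_cost=RESTART_COST_IDLE_SECONDS):
    """Net fuel saved by switching off, in seconds-of-idling equivalent.

    A negative value means the restart costs more fuel than idling would have.
    """
    return wait_seconds - restart_cost


# Hypothetical waits: a brief pause, a long red light, a school pickup line.
for wait in (3, 30, 300):
    saved = fuel_saved_by_switching_off(wait)
    print(f"{wait:>3} s wait: net saving of {saved} idle-seconds of fuel")
```

This accounts for fuel only; wear from frequent restarts, as noted above, is a separate consideration that the sketch does not capture.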

But there is no debate when it comes to idling while waiting to pick someone up. When picking up kids it could also be a teaching moment. As a bonus, the young staff herding the kids will also lead better respiratory lives as a result.

Vikram Rao

GREENER CARS

June 19, 2012

Other than the imagery of British Racing Green (a fine color but currently not in vogue even on a diminutive Austin-Healey), to most people this title evokes electric cars, fuel efficiency and hybrids. The June 18, 2012 issue of the Wall Street Journal had two pieces that prompted this post. One was on innovation in the internal combustion engine and the other on the promise of natural gas fueled cars and the hurdles therein. These were unconnected, but easily could have been, so we will take this opportunity to connect them.

The article America, Start Your Natural-Gas Engines deals primarily with the potential cost advantage of displacing gasoline with cheap natural gas, with the associated gains on tailpipe emissions. Such a car would certainly be considered greener than one running on gasoline. But they correctly point out that a compressed natural gas (CNG) tank will have four times the volume of a gasoline tank and weigh more due to the thick walls necessitated by pressures in excess of 3000 psi.

Shifting gears now, the other article, New Twists on an Old Idea, describes several new variants of the internal combustion engine designed to make it more efficient. The efficiency improvements claimed range from 15% to 30%, and some variants have reductions in weight. But all are radical departures from orthodoxy, a tough pill to swallow for an industry in which capital costs are very high.

Assuming that much of the driver for engine innovation is to reduce gasoline consumption and associated emissions, this could be achieved by using CNG as the fuel, the precise subject of the other article. The CNG could partially or completely replace the gasoline, or diesel for that matter, and the emissions would also be reduced. The article discusses two initiatives to improve the volumetric efficiency. Not mentioned is extremely promising ongoing research at Texas A&M University, Northwestern University and the Lawrence Berkeley National Laboratory, to name just three. These three are pursuing the class of compounds known as Metal-Organic Frameworks or MOF’s, which adsorb large quantities of methane and store it at conventional pressures. These are still at the laboratory scale and build upon research in inexpensive hydrogen storage. The target would be to double the volumetric efficiency of CNG in a low pressure tank. Another promising avenue being pursued is the use of a graphene variant for the same purpose. Some of these are poised for scale up so we are not talking blue sky research.

Engine Innovation: Engine innovation ought to move out of the old paradigm of improving the gasoline fueled engine. Gasoline has an inherent disadvantage with respect to cleaner alternatives such as methane, methanol and ethanol. That disadvantage is octane rating. All three cleaner fuels have octane ratings in excess of 110 compared to 87 for regular gasoline. Higher octane rating allows the fuel to be more compressed prior to being fired. This produces more energy per unit of fuel combusted. That is the definition of efficiency. So, a simple route to more efficient engines is an engine with a high compression ratio rather than complicated radical departures such as in the Journal piece. Of course, those inventors were forced in that direction because of the aforementioned infirmity of gasoline.

So, how outlandish is the idea of ultra high compression engines (ratios in excess of 15 compared to about 8.5 for a conventional V8)? Indy race cars have compression ratios near 17 and run on methanol or ethanol. Diesel engines have compression ratios in the vicinity of 16. The investigation ought to encompass both pure natural gas use and mixtures with gasoline or diesel. In the case of the latter, the host engine is already a high compression engine, albeit not spark ignited. A high ratio of natural gas may require spark ignition. But all of this can be worked out. The key point is that innovation in engines more fruitfully ought to target utilizing the high octane rating of natural gas rather than improving the performance of that octane challenged and polluting fuel, gasoline.
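The link between compression ratio and efficiency can be made concrete with the textbook ideal (air-standard) Otto cycle, whose thermal efficiency is 1 - r^(1 - gamma) for compression ratio r. This is a sketch under idealized assumptions, not a model of any real engine: gamma = 1.4 is the usual value for air, and actual engines fall well short of these numbers because of heat loss, friction and incomplete combustion.

```python
def ideal_otto_efficiency(compression_ratio, gamma=1.4):
    """Thermal efficiency of the ideal (air-standard) Otto cycle.

    gamma is the ratio of specific heats; 1.4 is the usual value for air.
    """
    return 1.0 - compression_ratio ** (1.0 - gamma)


# Compression ratios taken from the discussion above: a conventional engine
# (about 8.5) and a diesel-like ultra high compression engine (about 16).
for ratio in (8.5, 16.0):
    eff = ideal_otto_efficiency(ratio)
    print(f"compression ratio {ratio:>4}: ideal efficiency {eff:.1%}")
```

The ideal cycle only shows the direction and rough size of the effect; how much of it survives in practice is exactly what the proposed engine research would have to establish.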

If the Wall Street Journal could indulge in a do-over, the companion article to the one on natural gas vehicles ought to be one on engine innovations enabling the new, clean, domestically produced, fuel.

Vikram Rao

DISTRIBUTED ENERGY: SHADES OF GREEN

June 18, 2012

To many, distributed energy conjures up the vision of small scale solar power and wind generated electricity, and by inference a green feeling. The reality is that some of the most ubiquitous forms of distributed energy are decidedly not green. In part this is because the primary reason for them has been to augment shortcomings of power on the grid, and expediency has dictated behavior.

The current system of electricity generation and distribution is largely an artifact of the way we engineer things. A large plant is more economical per unit of energy produced. This is known as “economies of scale”. In the early part of the twentieth century central plants were rated at 60 megawatts. By 1970 the majority of plants were rated over 1 gigawatt (1000 megawatts) and this was largely driven by nuclear plants, which were designed for the large scale, likely because the control systems cost the same to build and man, large or small. Large plants dictated that we have transmission lines covering significant distances, incurring losses. In the US the losses average about 8%, but in some developing nations these could be up to 40%. The majority of this large loss is theft. There are also over a billion people not served by electricity at all. Ought they to be served in the conventional way with the associated losses? Or should we engineer distributed generation uniquely tailored to each environment?

Grid Parity: When new systems are conceived, their economic viability is judged by their ability to achieve “grid parity”. This is roughly defined as being on a par with the average cost of delivered electricity in that area. In most communities in developed nations this figure is in the single digits, or low double digits, in cents per kilowatt hour. Examples of the higher figures are New York and San Francisco, with the state of Hawaii the clear outlier, weighing in at over 25 cents. The use of grid parity gives alternative power sources a clear hurdle to cross. But therein lies an inherent fallacy: averages ought not to dominate the discussion. An energy source providing power between 12 noon and 6 pm ought to be compared in price to the marginal cost of conventional electricity in that period. In many cases that is several times the average. Solar power can be expected to produce in that time period and ought to be accorded a commensurate advantage. By the same token, wind tends to blow at night on land, so would produce electricity during a low price period. In some areas nighttime power has practically zero value. However, if stored and delivered during peak hours, the value would be higher. This further underlines the need for storage as an important companion to wind energy.

But what is the meaning of grid parity for people not served by the grid, including the billion people mentioned above? Many of these places derive their power from diesel, and in some instances, kerosene. The high cost of the fuel would allow a number of alternative sources to achieve cost parity. In some of these communities, as in parts of India, the government often subsidizes the fuel price, which could have the unintended consequence of disadvantaging alternatives.

Shades of Green: Despite the outstanding examples of distributed power from wind and solar, distributed energy does not necessarily equate to clean generation. In fact the vast majority of backup power supplies are powered by diesel. In countries with uncertain power and frequent brownouts, businesses employ diesel generators almost exclusively. Whilst diesel is an energy dense fuel and appropriate for the purpose, the health impact of its emissions makes it decidedly not green. Recent research posits it to be worse than second hand smoke as a cause of lung cancer. So, displacement of diesel power can be expected to be both cost effective and green.

Combined heat and power is an outstanding example of distributed energy. When power is produced a great deal of energy is lost in the form of thermal energy. Nuclear plants use considerable water to cool down the system and the steam is simply released. The most effective use of thermal energy is to use the steam and low grade heat in buildings and businesses. Since steam and heat cannot be transported effectively, power plants employing this energy conserving approach are by necessity located near users out in the community. The green aspect derives from the more efficient use of the fuel combusted: such plants typically have double the efficiency of conventional power plants. The carbon dioxide released is now halved per unit of energy produced. Incidentally the same argument applies to electric cars, which are 60% more efficient than conventional cars when compared on equivalent terms. The zero emissions at the tailpipe are replaced by emissions at the power plant, but the system efficiency reduces the emissions per mile driven. Electric vehicle naysayers are fond of saying that they are only as clean as the power running them. Not exactly.
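The halving argument above rests on a simple inverse relationship: for a given fuel, the carbon dioxide released per unit of useful energy scales as one over the efficiency. A minimal sketch of that relationship follows. The function name is hypothetical, and applying the same rule to the 60% figure for electric cars assumes, purely for illustration, that the fuel burned per unit of input energy is equally carbon intensive in both cases.

```python
def relative_emissions(efficiency_gain):
    """CO2 per unit of useful energy, relative to the baseline plant or car.

    efficiency_gain is the new efficiency divided by the baseline efficiency.
    Assumes the same fuel (same carbon per unit of fuel energy) in both cases.
    """
    return 1.0 / efficiency_gain

# Combined heat and power: the text cites double the efficiency.
chp = relative_emissions(2.0)

# Electric cars: the text cites 60% more efficient, i.e. a gain of 1.6.
# Illustrative only; real grids burn a mix of fuels.
ev = relative_emissions(1.6)

print(f"CHP emissions relative to a conventional plant: {chp:.3f}")
print(f"EV emissions per mile relative to a conventional car: {ev:.3f}")
```

Under these assumptions the emissions per mile for the electric car fall by a little over a third, which is the sense in which the car is cleaner than the power running it would suggest.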

Vikram Rao

METHANOL IS PRO CHOICE

April 27, 2012 § 8 Comments

Methanol is pro life as well, the good life. Lower cost driving that is relatively immune to gasoline price run ups, and lower emissions to boot: now that is living well. Add to that the fact that in the production of methanol we have a choice of starting raw material. Unlike ethanol, that other alcohol, methanol is easily derived from a host of carbonaceous materials other than natural gas. So it is not hostage to the price of a single feedstock, as ethanol has been to the vagaries of corn prices.

As we have discussed previously, flex fuel vehicles capable of running any mixture of either alcohol or gasoline would afford consumers choice. The E85 experience was not all positive because ethanol prices without subsidies were relatively high in the US. Brazil, with its low cost sugar cane derived ethanol, is a different story. Now with the disappearance of the import tariff on ethanol, perhaps Brazilian ethanol will be viable here. But ethanol from biomass still faces economic hurdles. So, the choice of feedstock in this country is pretty much limited to corn. Other crops such as sweet sorghum have not yet become viable.

Methanol on the other hand is very simply produced from natural gas, coal, petroleum coke or woody biomass, pretty much in order of ascending cost to manufacture.

This chart was derived by RTI engineers for a typical methanol plant producing 5000 tonne/day. Plants double or triple that size are also not uncommon. Costs at such plants would be comparable. At April 2012 prices methanol could be produced for about 30 cents per gallon. Add to that typical distribution costs and taxes of 30 cents. Then double that for gasoline equivalence because methanol has half the energy content of gasoline. We come up with methanol costing roughly $1.20 at the pump on a gasoline equivalent basis. Compare that to regular gasoline today at about $3.80 per gallon. Do keep in mind, though, that with today’s low compression car engines the methanol tank would be roughly twice the size of a gasoline tank, or you would simply have to refuel more often. This would be a part of the consumer trade off. As reported in a previous blog posting, high compression engines could eliminate that penalty.
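The pump price arithmetic above can be sketched in a few lines. This is only an illustration of the calculation in the text: the function and parameter names are hypothetical, and the factor of two reflects the statement that methanol has half the energy content of gasoline.

```python
def methanol_pump_price_gge(production_cost,
                            distribution_and_taxes=0.30,
                            energy_ratio=2.0):
    """Methanol cost in dollars per gasoline-equivalent gallon.

    production_cost and distribution_and_taxes are in dollars per gallon
    of methanol; energy_ratio is the number of gallons of methanol needed
    to match the energy in one gallon of gasoline.
    """
    per_gallon_methanol = production_cost + distribution_and_taxes
    return per_gallon_methanol * energy_ratio

# April 2012 figures from the text: about 30 cents per gallon to produce.
april_2012 = methanol_pump_price_gge(0.30)
gasoline = 3.80  # regular gasoline, dollars per gallon, as quoted in the text

print(f"Methanol: ${april_2012:.2f} per gasoline-equivalent gallon")
print(f"Discount to gasoline: ${gasoline - april_2012:.2f} per gallon")
```

Changing `production_cost` shows how sensitive the pump price is to the price of natural gas, which is the subject of the next paragraph.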

But natural gas pricing today is abnormally low in large measure due to the warm winter. A more normal price would be the October 2010 price shown in the figure. That would put methanol at the pump at about $1.60 per gallon, still a steep discount to gasoline. Looking out further, we forecast the ceiling for natural gas pricing as shown. This has support from the study by Amy Jaffe and colleagues at the Baker Institute in Houston, who used different methods. At the upper end of the range we could expect methanol at the pump to be $2.30 per gallon. The likelihood of gasoline dropping to those levels in the foreseeable future is extremely remote and episodic at best. All forecasts of oil prices are well into three digits and not the sub $70 per barrel they would have to be to get gasoline to break even with methanol. We are forced to conclude that methanol will be cheaper than gasoline on a miles driven basis for decades.

That leaves the question of whether natural gas supply will keep up with the demand. The concern is valid to some degree because of the expected massive displacement of coal as a fuel for electricity production. Add to that the expectation of rapid expansion in other chemical industry segments such as fertilizer production. Finally, export of liquefied natural gas (LNG) will certainly be in play. LNG adds between $3 and $4 to the cost of a million BTU, the position in that range depending upon how far away the delivery point is. Even with that mark up, gas at prices shown in the graph will be profitable for delivery to countries such as Japan and India, which are currently paying over $12 per million BTU. Such export is being resisted by the US chemical industry because of worries of price escalation, so it may well not happen. Said industry is enjoying a windfall at current gas prices. Anhydrous ammonia sells for between $600 and $800 a tonne. The raw material cost of that at April 2012 natural gas prices is under $60. That is the definition of profit.

Methanol from coal can be expected to cost about the same as methanol from natural gas at the upper end of the range in the figure. For this computation we take $25 per tonne for the low grade coal at the mine mouth. High grade coal at $150 per tonne would be out of the question and unnecessary. Petroleum coke is a by-product of heavy oil processing and has very low value. But it is very high in carbon and extremely low in ash. It can, however, have high sulfur and heavy metals. These last are manageable. One could expect methanol from petroleum coke to be just a bit higher than from low grade coal. All of these are still very competitive with gasoline. Finally there is biomass. The lignin content of some woody biomass is particularly deleterious for ethanol production. But thermal processes used to make methanol are not bothered by that aspect. The costs would be higher than for the other sources discussed but further research could bring that down.

In summary, methanol offers consumers of transport fuel a viable low cost choice. Producers will have choice with respect to raw materials, with natural gas currently being the low cost winner. But in the future, a significant portion could be from poorly utilized resources such as low grade coals and petroleum coke. Finally, renewable sources such as woody biomass could be made economically viable. The only loser in this scenario will be imported oil. If tempted to drink to that, make sure it is ethanol.

Vikram Rao

The Road to Energy Independence

April 4, 2012 § 3 Comments

Responsible production of shale gas will essentially eliminate import of natural gas. That leaves the big ticket item, oil. Here too, the notion of independence can usefully be bifurcated, with the first goal being independence from distant and unreliable sources. A first step could be to target the oil passing through the Strait of Hormuz. Iranian saber rattling today concerns that flow.

The EIA forecasts that in 2022 we will import 7 MM bpd, down from the 8.1 MM bpd in 2011. I think that if pipelines are built from North Dakota, Bakken oil will eat into this number more than already forecast. But sticking with their figure, first subtract Canada and Mexico. Canada can be expected to ramp up its current flow of 2.2 MM bpd to at least 3.0 MM bpd. We have a special relationship with the Canadians: the bulk of their oil is refined in the US even if some of it is upgraded in country. Aside from the high carbon footprint of this oil, this is a desirable and secure relationship. There is a fair amount of trade in natural gas as well. Mexico currently supplies 0.8 MM bpd. This is at considerable risk of decrease because of the decline in the Cantarell field, but we will leave it at that figure for 2022. This too is heavy oil suited to our refineries.

Of the 3.2 MM bpd balance, we estimate about 1.7 MM bpd passing through the Straits. So, one strategy would be to target oil alternatives to that level. Ignoring for the present the fact that a barrel of oil does not generate a full barrel of transport fuel, we can target 1.7 MM bpd of oil replacement. A rough calculation of all sources indicates this is viable, as enumerated below.

- Sasol, the South African leader in GTL, has already announced construction of a GTL plant in Louisiana reportedly rated at 90,000 bpd of fuel. Assume at least one other such plant, bringing the total close to 200,000 bpd from GTL, emboldened by low gas prices.

- Alaska offers three distinct opportunities for supplying the Lower 48. One is an abundance of heavy oil near infrastructure in Prudhoe Bay. It suffers from high viscosity and cannot be sent down the pipeline. But it could be blended with two different light oils. One is shale oil, much like that in the Bakken and Eagle Ford. One small company, Great Bear Petroleum, is reportedly collaborating with Halliburton to deliver this fluid. The other source of diluting fluid is liquid from natural gas. Prudhoe Bay has at least 35 trillion cu ft of recoverable gas that has no market. This “stranded” gas has low value and can inexpensively be converted to liquids using well known methods. The Trans Alaska Pipeline System is currently pumping just over 500,000 bpd. When it drops much below this the entire economics of transport are at risk. So there is an imperative to feed more into that pipeline. The measures noted above should accomplish that as well as supply at least an additional 200,000 bpd to the Lower 48.

- Long haul trucks switching to LNG or methanol could reasonably target 20% of current fuel usage, which accounts for 0.5 MM bpd of oil.

- Methanol, ethanol, CNG, biofuel and electric cars could target 1.0 MM bpd. A significant part of this, and relatively straightforward, would be CNG displacement of diesel in metropolitan public and commercial transport. For passenger vehicles methanol appears to be the most advantaged on a cost basis.
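The rough calculation can be tallied directly. The sketch below uses only the figures quoted above, expressed in thousands of barrels per day to keep the arithmetic in whole numbers; the variable names and dictionary labels are shorthand introduced here for illustration.

```python
# All figures in thousands of barrels per day (kbpd), as quoted in the text.
imports_2022 = 7000   # EIA forecast of total imports
canada = 3000         # expected Canadian supply
mexico = 800          # Mexican supply, held at the current figure

balance = imports_2022 - canada - mexico
straits_target = 1700  # estimated portion of the balance passing through the Straits

replacements = {
    "GTL plants": 200,
    "Alaska heavy oil blended with light liquids": 200,
    "Long haul trucks on LNG or methanol": 500,
    "Alcohols, CNG, biofuel and electric cars": 1000,
}

total = sum(replacements.values())
surplus = total - straits_target

print(f"Balance after Canada and Mexico: {balance} kbpd")
for source, kbpd in replacements.items():
    print(f"  {source}: {kbpd} kbpd")
print(f"Total replacement: {total} kbpd against a target of {straits_target} kbpd")
print(f"Margin over target: {surplus} kbpd")
```

On these numbers the four sources together slightly exceed the 1.7 MM bpd target, which leaves some room for any one of them to fall short.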

An angle other than a Straits strategy is a study of the marginal domestic barrel and what it replaces. New domestic oil production is all light sweet oil. This is most like the oil from Saudi Arabia and Nigeria. So that may make sense as the first to be displaced. The Saudi portion is, of course, Straits related and currently stands at about 1 MM bpd. Similarly, the uptick in Canadian oil that we predict will displace heavy crude such as from Venezuela, which currently weighs in at about 0.65 MM bpd. The main point is that crude quality is variable and refineries are choosy, so country strategies have to recognize this.

Shale gas produced responsibly will be a key enabler for methanol to be produced at prices attractive with respect to gasoline. Broad scale availability of FFVs and associated fueling infrastructure will give the public choice. Tomorrow that choice could include other alcohols or methane, and a suggested high performance FFV will enable that. Today methanol appears to be particularly advantaged. Ultimately, gasoline (and diesel) can be rendered just another option, not a requirement. And even that portion could be from domestic production.