Being Barry

May 10, 2026

Barry hung up his earthly bicycle clips a few days ago. He will be remembered for many things, but mostly for being Barry. By some measures he was the quintessential Aussie. A farmer in the pre-Amazon days, who got by with what was available and with a skill acquired in the school of hard knocks. Failure was a luxury ill afforded. The impossible simply took longer. This frontier mentality was not unique to Barry, but it was manifest at an exceptional level. Not having been at his side over the years, I have but a few examples. I suspect they will suffice.

Lest Barry come across as some sort of paragon, we will begin with one of his foibles. Barry was a believer in the circular economy before it came into vogue. The starkest manifestation was that Barry never saw any roadside trash that he did not see as useful. That it came in handy when he needed to walk on water (all will be revealed shortly) is just the sort of encouragement that he did not need. Would that our foibles were all this harmless.

Barry is testimony to the fact that inventiveness is not the purview of only the highly educated. As with many farmers, including his parents, Barry went to work on the farm prior to finishing high school. When a portion of the citrus orchard needed new planting, the saplings were widely spaced to account for their girth when they would be fully grown. Watering was still done with overhead “monsoons”. He decided to grow vegetables in the medians in part to put the water falling on the medians to use; shrewd but not innovative. Carrots were chosen for market demand and minimal spoilage. The soft texture of raised beds favored the root vegetable’s growth condition. But what nailed it was his innovation to eschew a single wide raised bed. He decided that parallel narrow beds afforded the carrot roots less compaction by being close to edges. Many bountiful harvests rewarded this thinking.

A defining trait was his love of bicycling. Even well into his eighties, a 17 km ride each way to town to pick up the newspaper was a mere bagatelle. An 80 km ride celebrated that milestone birthday. When he mentioned to us last year that they had joined the Meals on Wheels program, we expressed surprise that the program delivered out to their place. It did not. He biked in with an insulated pack to fetch the food. Meals on wheels, yes, but his wheels. Of course.

Now to the walking on water mentioned earlier. You were no doubt thinking of this figuratively. Even though the country is fondly known as Oz, wizardry is not expected. However, hark back to that “the impossible simply takes longer” ethos. The town embraced that with a “walk on water” competition at an annual event. There were rules regarding use of only commonplace materials, cost limits, and the like. The devices were to be affixed to the feet and a traverse on top of the water was required for several meters. Even Barry had suffered the wet fate of others in prior years. But one year fortune handed him litter, which when combined with assorted objects in his collection, produced the triumphant traverse. Indomitable comes to mind. And yet, he was the gentlest of souls, thinking well of people as the default option even against mounting evidence. His glass was always half full.

He was just being Barry.

Barry G. H. Goddard 1937 – 2026

Vikram Rao

Hot Rocks Heat Up

April 29, 2026

Enhanced Geothermal energy is all grown-up now. Fervo Energy announced an IPO on April 17, within months of a Series E fund raise. Some expect the valuation to be nearly double that in the Series E, although the company is more cautious in the announcement. While this is a significant indication of investor interest, and the timing is right because of the expected burgeoning demand from data centers, the evidence for geothermal coming of age is in the facts they disclose. (Disclosure/disclaimer: I advised them in the early years, but all data opined upon are in the public domain.)

First some basics. To date geothermal energy at commercial scale has been a niche play, relegated to the volcanic Pacific “Ring of Fire” and areas with continental plate movements (Iceland and the East African Rift Valley). In addition to hot rocks at reasonable depths, this requires a water source below, the constancy of which has been a constraint. In the industry this type of geothermal is referred to as hydrothermal. Enhanced geothermal is the term used when water is pumped down artificially and returning hot water is used to produce electricity or simply used as a heat source. In this piece, all references to “geothermal” are to the enhanced variety.

When I use the term “grown-up”, I mean scalable to meaningful levels. In making that assessment, I am extrapolating beyond the facts and figures in the Fervo announcement and presenting the logic for the extrapolation. I will lead with the conclusion that this is a watershed event. Geothermal will scale and become the primary source of firm carbon-free power.

The promise of geothermal has been there for several years, as it has been for small modular reactors (SMRs), and long duration storage concepts, all designed to complement wind and solar, which are low-cost, but have temporal gaps leading to low capacity factors¹. Definitive proof of scalability and delivered cost was needed for geothermal to take its place as a double-digit percentage of the world power supply. And carbon-free to boot.

Fervo just delivered that, but not with the IPO announcement, not with the promise of electricity dispatch commencing late this year on the 500 MW Cape Station project in Utah, not with the projects in the pipeline to deliver over 3 GW, and not even with the lease positions that could generate 42 GW. The turning point was the announcement of the results from Project Red. OK, so you need to be a bit geeky to fully discern the importance of the results from this project, but here is a crack at the broad strokes, as deciphered from their public disclosures.

Project Red comprised a well doublet (two substantially parallel wells, one injector, one producer) dispatching commercial quantities of power. The wells were heavily instrumented and there was an additional “observation” well. The most important result from the project was that the model predictions were realized and in so doing de-risked scale up both in operational feasibility and unit economics. A key element of this result was the thermal stability of what I refer to as the heat reservoir (as opposed to oil and gas reservoirs). For 500 days of operation, the reservoir temperature remained steady and in the additional 100 days, dropped a nominal amount. This is something geothermal watchers such as I had worried about, the balance between reservoir heat maintenance and output. Project Red had deliberately short laterals. Adding lateral length was certain to deliver more, with the same thermal stability. That is the design of the future, which almost certainly was implemented in Cape Station, with multiple well pairs, and with tweaks, including reduced diagnostic instrumentation.

A word on the economics of production. Expect cost per unit output to come down significantly with scale. The modeling is sophisticated, but the unit operations are commonplace in the oil and gas sector. In shale oil and gas, when multiple wells were drilled on “pads”, cost plummeted with concepts such as rigs on rails, batch drilling and the use of AI for efficiency in operations. Every reason to believe this will apply to geothermal operations. Also, in an odd twist of fate, the workers employed in a declining oil market (gas will have longer legs and will even grow for some years) will simply move over to geothermal operations. No new training, no deficit in supply.

But geothermal needs to be ready for the crew change. Now it looks like it might be.

Vikram Rao

April 29, 2026

¹ https://www.rti.org/rti-press-publication/carbon-free-power, p. 63.

Why the Iran War Has Raised Gasoline Prices

April 4, 2026

The United States is a net exporter of oil and gasoline. In fact, it is the largest exporter of gasoline in the world. The Iran war, which resulted in the blockade of the Strait of Hormuz, an important oil transit waterway, has caused shortages of oil in net importing nations. Why, then, has it caused oil (and gasoline) prices to rise steeply in the US, a net exporter?

Before answering that question, here is another seeming anomaly. The United States is a net exporter of natural gas, mostly in the form of liquefied natural gas (LNG). The Iran war, and the associated Strait of Hormuz blockade, has caused LNG shortages in net importing nations, causing a dramatic rise in price. Yet the price of natural gas in the United States has scarcely moved at all.

The answer is a bit nuanced but begins with the fact that oil is fungible. This means that similar grades of oil from different sources can be substituted for one another. This makes oil a world commodity in terms of price. When the price goes up in a net importing nation, so does it in the US.

The US situation is further complicated by the fact that, prior to about 2010, we were net importers of oil. The cheapest oil available was difficult-to-refine heavy oil, and it was right next door, in Canada, Mexico and, to a lesser degree, Venezuela. American refineries were built to handle this stuff using expensive processing equipment. When abundant shale oil made the US technically self-sufficient, refineries were faced with the dilemma of buying more expensive domestic oil and idling the expensive kit made for heavy oil. This dilemma has been solved by importing heavy oil and at the same time exporting even more light oil, making us a net exporter.

Now to the price of gasoline. When war-related gasoline prices rocketed up in importing nations, the US suppliers benefited from this. But importantly, the international (export) price now applied equally to the same fuel sold domestically. Ergo, a net exporter of gasoline still had high domestic prices.

That did not happen with natural gas. Very simply put, gas is not fungible. It is not exported in the same form that it is sold domestically. The export is in liquid form, with a price tag determined by world prices. Natural gas in gaseous form is a regional commodity. It has a supply and demand balance that is unaffected by foreign wars. A minor exception is parts of the upper east coast which use LNG. Aside from the high likelihood of their paying world prices, the 1920 Jones Act restricts their supply to US built, owned, operated and crewed vessels, adding another constraint to a heavily utilized fleet.

So, there you have it. Domestic natural gas is insulated from the perturbations in supply caused by foreign wars, but oil and derivatives such as gasoline are not. I have been asked whether there is a remedy. In a free market, not really. In theory, some insulation could be provided by producing methanol from low-cost natural gas and then processing that to gasoline using a process invented decades ago by Mobil. In practice, it is not going to happen.

Just rattle sabers but keep them sheathed*.

*Know when to hold them, know when to fold them, from The Gambler (1978), performed by Kenny Rogers and written by Don Schlitz.

Vikram Rao

April 4, 2026

Can Batteries Pivot from EVs to Grid Support?

February 21, 2026

The February 12 print edition of the NY Times had a piece under the Climate Forward banner on A2. It observes that policy actions have led to a decrease in demand for electric vehicles (EVs), and that at least two auto manufacturers are pivoting to repurposing battery manufacturing to supply the market for storage in support of two areas with relatively robust demand. These are electricity grids with intermittency in renewable power and data centers.

First, the premise. Certainly, policy measures by the government, especially the repeal of the tax credit, have reduced the consumer demand for EVs in the US. Equally, grids continue to add renewable capacity, in part due to demand and in part due to solar electricity today being the lowest-cost form of energy. However, due to low capacity factors, and temporal fluctuations, this source requires storage as backup. The most ubiquitous storage means are batteries.

The newest entrants into the power demand sweepstakes are data centers, as noted in the NYT piece. The owners of these power hogs prefer electricity that is substantially carbon free. Microsoft went so far as to enable de-mothballing of the Three Mile Island conventional nuclear plant and guaranteeing offtake at a heavy premium to prevailing prices in the region. Expect also more “behind the meter” capability, meaning not acquired from the grid. This business model is a net benefit to consumers in the region because the cost will not be passed on to them.

The pivot to repurpose EV batteries to grid support applications is informed by the fact that EV batteries have two distinct cathode chemistries, and one is more suited to the stationary application. For the same storage capacity, the Nickel Manganese Cobalt (NMC) variant is lighter, but more costly than the Lithium Iron Phosphate (LFP). In the EV application the lightness often trumps the cost element primarily because longer range can be achieved with manageable addition to weight. Longer range and fast charging have emerged as dominating features sought by customers, especially in the higher end sedan and SUV lines. This has resulted in the vast majority of passenger EVs being powered by NMC batteries. In fact, to my knowledge, the only passenger EV using LFP batteries is the Tesla Model 3 with rear-wheel drive. This is a vehicle that targets the lower cost and limited range market segment. Pickup trucks are more suited to LFPs because they can generally tolerate the extra weight. The standard range Ford F-150 Lightning uses LFPs and the extended range one uses NMCs.

To recap, defining characteristics of batteries in EV applications are high energy density and light weight, allowing for greater range before requiring recharging. By contrast, grid support batteries are not too bothered by these characteristics, because space is not at a premium, and the extra weight is tolerated in exchange for lower prices. But they prioritize the feature of longer life, defined by surviving more numerous charge/discharge cycles. This is because grid support requires batteries to be recharged much more frequently than in the motive application.

LFP batteries were invented by Prof. Goodenough at the University of Texas, Austin. He shared the Nobel Prize with two others in 2019 for lithium-based batteries as a class. While invented in the mid-1990s, LFPs were initially shunned by EV manufacturers. The defining characteristic of an LFP is the longer life. These batteries last for over 4 times as many charge/discharge cycles as NMC equivalents. All rechargeable battery lives benefit from not fully charging or discharging, and NMC batteries, including the ones in cell phones, last longer if charged just to the 80% level. LFP batteries are something of an exception in that a full charge to 100% has minimal impact on life. Another in the plus column for LFPs. A further plus is that LFP batteries are also believed to be safer.

The use of iron in place of more scarce imported cobalt, manganese and nickel is preferable from a resilience standpoint. Compared to the other metals, the price of iron is much lower and more stable. Cobalt has been particularly volatile, ranging from USD 22,000 to 94,000 per metric ton over the last eight years. Importantly, much of the volatility on the upside has been attributed to EV demand.

Now to the pivot. All EV batteries could, in principle, be used in the grid support market. But as noted above, LFPs are more suited, and their preferential use will likely stabilize the price of cobalt, thus benefiting the NMC market. Further, manufacturers of the LFP variant will have cost and desirability advantages in serving the grid market and, to a degree, in competing with Chinese imports. A NY Times story on February 16 notes that the F-150 Lightning battery plant in Tennessee is being shut down but may re-start to serve storage. They can manufacture both types but would be well served to make just LFPs. Absent tariffs, NMC batteries will have a tough time competing with imported LFPs.

In conclusion, the pivot makes more sense for the LFP* than for the NMC EV battery variant.

* The slow one now, will later be fast, from The Times They Are a-Changin’ (1964), written and performed by Bob Dylan.

Vikram Rao

February 21, 2026

The Venezuelan Oil Story is Murky

February 14, 2026

After the spectacular Maduro-related events of the last few weeks, Venezuelan oil has received considerable attention in the press. Even accounting for lack of domain knowledge in the press corps (and the industry “experts”), the tens of columns I have perused miss some key points. Setting the record straight here.

The missing bits are the quality and price, especially the latter. But first, confirmation of the fact that the Venezuelan oil reserves are the largest in the world. Prior to around 2007, before the previous dictator took charge, the country produced 3.5 million barrels per day (M bpd). That was then, as now, the principal source of revenue for the country. The national oil company, PDVSA, was highly regarded, as was their research arm, INTEVEP. That reputation is in the past. PDVSA is a shadow of its former self, as is INTEVEP. Some of their scientists, now refugees, are in Canada. Oil production is below 1 M bpd, much of it destined for China. Very little of it comes to the US. Distance is a factor, as is the comfortable (most of the time) arrangement with Canada, and to a degree Mexico, both suppliers of roughly the same quality oil as could be from Venezuela. Interestingly, just the specter of increased supply from Venezuela caused a drop in the price of Canadian oil recently. This is knee jerk. Most Canadian oil is preferable. More on that below.

Venezuelan oil is heavy. Literally. A higher specific gravity than the benchmark WTI or Brent oil. Oil is a mixture of molecules of different lengths. Heavy oil has a higher proportion of long molecules. This makes it more viscous and higher in specific gravity. Longer molecules must be shortened for the workhorse market commodities gasoline, aviation fuel and diesel. This process is known as fluid catalytic cracking and is expensive. Therefore, heavy oil has a market price lower than the benchmark WTI or Brent, by between 12 and 25%. This is one of the points not made in all those columns in the press. The WTI or Brent price of oil is mentioned, but while relevant, that is not the whole story.
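For the arithmetically inclined, the discount can be put in dollar terms with a minimal Python sketch. The 12 to 25% range is the one quoted above; the USD 70 per barrel benchmark and the function name are purely my illustrative assumptions, not a quoted market price or anyone's pricing model.

```python
def heavy_oil_price_range(benchmark_usd_per_bbl: float,
                          low_discount: float = 0.12,
                          high_discount: float = 0.25) -> tuple[float, float]:
    """Return (lowest, highest) heavy oil price implied by a benchmark price.

    The discounts are fractions of the benchmark (WTI or Brent) price.
    """
    lowest = benchmark_usd_per_bbl * (1.0 - high_discount)
    highest = benchmark_usd_per_bbl * (1.0 - low_discount)
    return lowest, highest


BENCHMARK = 70.0  # hypothetical benchmark price, USD per barrel
low, high = heavy_oil_price_range(BENCHMARK)
print(f"Heavy oil would fetch roughly USD {low:.2f} to {high:.2f} per barrel")
```

At that assumed benchmark, heavy oil would sell for roughly USD 52.50 to 61.60 per barrel, which is why quoting the WTI or Brent price alone overstates what a Venezuelan barrel is worth.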

So, yes, there is a lot of it in Venezuela. But it is valued lower than lighter oil and the market is limited to specialized refineries. And it gets worse than that. Whereas heavy oil is produced in Canada and Mexico (and California as well, for that matter), Venezuelan oil is distinguished, and not in a good way, by being exceptionally high in the metals Vanadium and Nickel. These act as “poisons” for the catalysts in the cracking process. Again, this can be handled, but at a cost. One option is the ROSE process, which can both remove these elements and capture the value in them. But it is not widely practiced. Yet, were the metals to be removed, they would constitute an excellent source of the relatively valuable element Vanadium, now desired for, among other applications, “flow batteries” for renewable power backup.

Finally, there is the question of whether the US needs Venezuelan oil. We have the odd situation of having many refineries geared to refine heavy oil, which would have much lower profit margins were they to use a higher proportion of relatively expensive domestic (light) oil. So, the country benefits from importing heavy oil, while at the same time exporting domestic light oil. In fact, we have been a significant net exporter of light oil and refined products since 2020.

So, where does that leave Venezuelan oil? In the useful, but not essential category provided Canada and Mexico can supply the need, which they seem to be able to. Abundant shale oil changed everything. It made the US a net exporter. But the pre-shale oil years caused the buildup of complex refineries to handle heavy oil. As a practical matter, that makes heavy oil import economically necessary. And profitable. The basic buy low, sell high mantra.

Vikram Rao

February 14, 2026

LNG’s Tax Break Controversy

August 5, 2025

A story is breaking that Cheniere Energy is applying retroactively for an alternative fuel tax credit for using “boil off” natural gas for propulsion of their liquefied natural gas (LNG) tankers in the period 2018 to 2024, the year that tax break expired. The story leans towards discrediting the merits of the application. Before we get into that, first some basics.

LNG is natural gas in the liquid state. In this state it occupies a volume 600 times smaller than does free gas, thus making it more amenable to ocean transport. It achieves this state by being cooled down to −162 degrees C. Importantly, it is kept cool not by conventional refrigeration, but by using the latent heat of evaporation of small quantities of the liquid. If the resultant gas is released to the atmosphere, it is a greenhouse gas 80 times more potent than CO2 over 20 years. In recent years, tanker engines have been repurposed to burn natural gas. The “boil off” gas, as it is referred to, is captured, stored and used for motive power. As with most involuntary methane release situations, capture has the dual value of economic use and environmental benefit. In the case of LNG tanker vessels, the boil off can handle most of both legs of the voyage. Short haul LNG trucks also have boil off gas, and it is unlikely that the expense of recovery and dual fuel engines is incurred.

Also, by way of background, Cheniere Energy is a pioneer in LNG. It began with their construction of import terminals in the 2008 timeframe. Shortly after that, US shale gas hit its stride and LNG imports evaporated. This was followed by US shale gas becoming a viable source of LNG export, and Cheniere again took the lead in pivoting to convert import terminals to export capability. Today they are the leading US exporter.

Now to the merits of considering boil off natural gas as an alternative fuel. The original intent of the law, which expired in 2024, appears to have been to encourage substitution of fuel such as diesel with a cleaner burning alternative. However, the letter of the law limits this to surface vehicles and motorboats. LNG vessels are powered by steam (using a fossil fuel or natural gas), and more lately by dual fuel engines using boil off gas and a liquid fuel ranging from fuel oil to diesel. Using a higher proportion of boil off gas certainly is environmentally favorable, mostly because sulfur compounds will essentially not be present and particulate matter will be vastly lower than with diesel or fuel oil. If this gas were not used for power, hypothetically, it would be flared, leading to CO2 emissions and possibly some unburnt hydrocarbons. Capture and reuse provide an economic benefit, so should it qualify as an alternative fuel?

An analog in oil and gas operations could be instructive. Shale oil can be expected to have associated natural gas, as light oil generally does because of the mechanism of formation of these molecules. Heavy oil, by contrast, could be expected to have virtually no associated gas. When oil wells are in relatively small pockets and/or in remote locations, export pipelines for the associated gas are not economic because of the small volumes or the remoteness. This gas is flared on location. Worldwide 150 billion cubic meters was flared in 2024, an all-time high. Companies such as M2X Energy capture this and convert it to useful fuel such as methanol, and in the case of M2X the process equipment is mobile. The methanol thus produced could be considered green because emissions of CO2 and unburnt alkanes would be eliminated.

Were that to be the case, the use of boil off gas has some legs in consideration of it being an alternative fuel. However, the key difference in the analogy is that in the case of the LNG vessel, an economic ready use exists. Not so in the remotely located flared gas. But is an economic ready use a bar for consideration? Take the example of CNG or LNG replacing diesel in trucks. They likely qualify for the credit. The tax break approval may well come down to a hair split on the definition of motorboat. An LNG vessel is certainly a boat, and has a motor, but does not neatly classify as a motorboat in the parlance*. But if the sense of the law is met, ought the letter of the law prevail?

Vikram Rao

*That which we call a rose, by any other name would smell as sweet, Juliet in Romeo and Juliet, Act II, Scene II (1597), written by W. Shakespeare.

The Frac’ing Dividend

June 29, 2025

Few will dispute the fact that the US was a net importer of natural gas in 2007. Cheniere Energy was gearing up to import liquefied natural gas (LNG) and deliver it to the country. Then natural gas from shale deposits became economic and scalable. How Cheniere reinvented their business model by pivoting to convert their re-gas terminals to become LNG producers is a story for another time. As is the tale of shale gas singlehandedly lifting the US out of recession. Low-cost energy is a tide that lifts all boats of economic prosperity, and this one sure did.

The story for today is that the technologies that enabled shale gas production are showing up as key enablers for geothermal energy, the leading carbon-free energy source which can operate 24/7/365, unlike solar and wind, which are intermittent, with capacity factors (roughly defined as the portion of time spent delivering electricity) of up to 25% and 40%, respectively. The short duration intermittency (frequently defined as under 10 hours) is covered by batteries. But for longer periods, the most promising gap fillers are geothermal energy, small modular (nuclear) reactors and innovative storage means. Of these, the furthest along are geothermal, as represented by advanced geothermal systems (AGS), some battery systems, and hydrogen from electrolysis of water using power when not needed by the grid.
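To see what those capacity factors mean in delivered energy, here is a minimal Python sketch. It treats the capacity factor as the fraction of nameplate output actually delivered over a year, consistent with the rough definition above. The 100 MW plant size and the 90% figure for a firm source are my illustrative assumptions, not numbers from any project.

```python
HOURS_PER_YEAR = 8760  # 24 hours x 365 days


def annual_energy_mwh(nameplate_mw: float, capacity_factor: float) -> float:
    """Energy delivered in a year by a plant running at the given capacity factor."""
    if not 0.0 <= capacity_factor <= 1.0:
        raise ValueError("capacity factor must be between 0 and 1")
    return nameplate_mw * HOURS_PER_YEAR * capacity_factor


NAMEPLATE_MW = 100.0  # hypothetical plant size, identical for every source

# 25% and 40% are the upper-end figures quoted in the text; 90% is assumed.
sources = [("solar", 0.25), ("wind", 0.40), ("firm source (assumed)", 0.90)]
for name, cf in sources:
    print(f"{name}: {annual_energy_mwh(NAMEPLATE_MW, cf):,.0f} MWh per year")
```

For the same nameplate rating, the assumed firm source delivers more than three times the annual energy of solar at its best, which is the gap the long duration options are meant to fill.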

The most commercially advanced AGS variant is that of Fervo Energy. It employs two parallel horizontal wells intended to be in fluid communication. Hydraulic pressure is used to create and propagate fractures from the injector well towards the induced fractures at the producer well. Fluid is pumped into the injector well and flows through the fracture network into the producer well. Along the way, it heats up while traversing hot rock. This hot fluid is used to generate electricity. It may also be used for district or industrial heating. The heat in the rock is replenished by heat transfer from near the center of the earth, where it is created by the decay of radioactive substances. The center of the earth is at temperatures close to that of the surface of the sun.

The two key enabling technologies for accomplishing the process described above are those of horizontal drilling (and drilling a pair reasonably parallel to each other) and hydraulic fracturing, known in industrial parlance as “frac’ing”. Clever modeling (from a co-founder’s PhD thesis at Stanford) dictates many operational parameters such as optimal well separation, but the guts of the operation is that employed in shale oil and gas production. With one difference. The temperatures are greater than in most conventional shale operations. This affects the drilling portion more than the fracturing one. Temperatures will be greater than 180 C in AGS operations, and in excess of 350 C in the so-called closed loop systems (which have no fracturing involved, except for one minor variant). Even in AGS systems, higher temperatures are preferred, and Fervo recently demonstrated operations at 250 C. While this stresses industry capability, it still falls firmly in oil and gas competency and the geothermal industry can rely upon this rather than attempt to invent in that space.

The frac’ing dividend mentioned in the title of this piece refers to advances in frac’ing operations which accrue directly to the benefit of AGS operations. A key one is the ability to use “slick water”, which is fracturing fluid with little to no chemicals. Opponents of shale gas operations have often cited the possibility of surface release of these chemicals as a concern. Similarly, accidental surface release of hydrocarbons in fracturing fluid has been a concern but is irrelevant here because of the complete absence of hydrocarbons in the rock being drilled. AGS operations do have the possibility of induced seismicity. This is where the pressure wave from the hydraulic pressure potentially energizes an active fault, if present in proximity, and the resulting slip (movement between rock segments) causes a sound wave in the seismic range. However, the risk is small, especially if the activity is in a naturally fractured zone, in which fractures can be propagated at lower induced pressures than in unfractured rock. In any event, competent operators such as Fervo are placing observation wells and to date induced seismicity has not been a concern. The sound heard is that of (relative) silence*.

Finally, the shale oil and gas industry devised economies of scale by placing multiple wells on each “pad”. These techniques, including elements such as the rigs moving swiftly on rails, are directly applicable to AGS systems using frac’ing. So, any reported economics of single well pairs could, in my opinion, be improved by up to 40% when tens of well pairs are executed on a single pad.

This is the big frac’ing dividend.

Vikram Rao

June 29, 2025

*And no one dared disturb the sound of silence, from The Sound of Silence, performed by Simon and Garfunkel, 1965, written by Paul Simon.

Wildfires: Pain Now, Even More Later

March 16, 2025

A March 14 story in the New York Times describes research efforts to quantify health outcomes from the Altadena fire. Once you get past the “toxic soup” sensationalism in the title, the content is good in identifying the long-term killer: ultrafine particles which can enter cells and cross the blood brain barrier, thus causing ailments ranging from cancer to dementia. The near-term privation wrought by fires, especially ones such as this one, close to urban areas, is overt. The long-term effects tend to get discounted, much the same as long term profits in business. But they are real, and worse for fires proximal to populated areas. Here’s why.

Forest fires usually originate and burn in remote areas. Direct impact is usually on small communities. They are evacuated and the burn is contained as well as possible. However, when they do occur close to major metropolitan areas, a somewhat different set of rules appear to apply. The priority is the protection of people and property. While this may appear to be a truism, it can lead to unintended consequences. Fierce burns are subdued to smolders and attention is shifted to the next fierce burn. This certainly achieves the priority purpose and may be the only practical way to achieve it, given limited resources.

However, in terms of effects on human health, all fires are not created equal. A fierce fire is one where oxygen is available for a complete burn of the flammable material. This principle applies to the burner on your stove as well. A complete burn is desirable on your stove to give maximal heat and to minimize unburnt hydrocarbons (you should shoot for a blue flame, not yellow). Same for forest fires. Parts of the tree, especially the bark, have aromatic (organic) molecules. An incomplete burn leads to these organic molecules being released into the air. This matters because these molecules are directly injurious to human health. There is another, somewhat more insidious, negative. These molecules coat the carbon particles (soot) emitted, making them much more toxic.

Forest Fire, painting, courtesy Falguni Gokhale

The importance of being small (with apologies to Oscar Wilde). Finally, soot particles are more toxic when they are small. Particles below about 100 nanometers (nm) are classified as nanoparticles. In public health circles these are known as ultrafine particles. Whereas larger particles impair respiratory pathways, ultrafine particles go a step further. They can enter cells and are transported throughout the body. They are known to cross the blood-brain barrier and are strongly implicated in exacerbating dementia.

As if this were not bad enough, ultrafine particles are particularly prone to deposition of organic molecules on their surface. This is because they have a large surface area for their mass, and that ratio is known to make them more reactive, a property that is used beneficially in chemical engineering. Here, it is all bad. Toxic organic molecules are now attached to particles which readily enter cells. Game, set and heading to match.
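To put rough numbers on the surface-area argument, here is a minimal sketch. It assumes the particles are smooth spheres with a density of 1.8 g/cm³ (an illustrative figure for soot, not taken from any study cited here) and compares a 100 nm particle with a 2,500 nm one. Real soot is clumpy and irregular, so actual values will differ; the scaling with diameter is the point.

```python
# Specific surface area (surface per unit mass) of an idealized spherical particle.
# Assumptions (illustrative only): smooth spheres, density 1.8 g/cm^3.

SOOT_DENSITY_G_PER_CM3 = 1.8  # assumed value, for illustration


def specific_surface_area_m2_per_g(diameter_nm, density_g_per_cm3=SOOT_DENSITY_G_PER_CM3):
    """Return surface area per gram (m^2/g) for a sphere of the given diameter.

    For a sphere, area / volume = 6 / d, so area / mass = 6 / (density * d).
    """
    diameter_m = diameter_nm * 1e-9
    density_g_per_m3 = density_g_per_cm3 * 1e6  # 1 m^3 = 1e6 cm^3
    return 6.0 / (density_g_per_m3 * diameter_m)


ultrafine = specific_surface_area_m2_per_g(100)   # upper end of the ultrafine range
larger = specific_surface_area_m2_per_g(2500)     # a 2.5 micron particle, for comparison

print(f"100 nm particle:  {ultrafine:.1f} m^2 per gram")
print(f"2500 nm particle: {larger:.1f} m^2 per gram")
print(f"Ratio: {ultrafine / larger:.0f}x")
```

Under these assumptions, surface per gram scales inversely with diameter, so the 100 nm particle offers 25 times the surface of the 2,500 nm one for the same mass of soot.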

Now to another negative attached to a smoldering burn as opposed to a fierce one. A smolder leads to a relatively high proportion of ultrafine particles when compared to a complete burn. A study of the Getty Fire in 2019 demonstrated this effect. This too was a fire near dense housing, a couple of miles from UCLA and very close to the Getty Museum. The study found that the median particle diameter was 130 nm in the flaming state and dropped to 40 nm in the smoldering state. Potentially confounding variables were eliminated in arriving at this conclusion.

The inescapable inference is that smoldering fires are a greater health risk than flaming ones because they create a higher proportion of ultrafine particles and emit volatile organic compounds, which preferentially attach to the ultrafine particles. Admittedly, the priority on dousing the flames is well placed, but more attention ought to be paid to putting out the smolders.

The Getty Fire, and several other large ones in California, were started by tree limbs falling on power lines, sparking them and igniting the underbrush. This brings into focus the fact that high voltage power lines are bare, devoid of insulation. No practical solutions exist to insulate the lines. Underground lines are found in communities but are not feasible across forests. Chalk one up for distributed power.

Toxicity of fire emissions is also a function of the type of wood. Lung toxicity studies demonstrated that eucalyptus was by far the worst, followed by red oak and pine. This is bad news for Australia, where the species is native. But California has it in abundance because it was imported as a construction wood for its characteristics of fast growth and drought tolerance. The use in construction did not pan out (the wrong variety was brought in), but eucalyptus loved the west coast setting and is now highly prevalent and considered an invasive species.

One final point regarding ultrafine particulate emissions from wildfires. These can, and do, travel across the continent. The smaller the particles, the further they will travel. Ergo, the most toxic particles impact much of the nation, not just the areas close to the fires.

And the pain in the form of disease will be felt long after the fires are a distant memory *.

Vikram Rao

March 16, 2025

*All my troubles seemed so far away, now it looks as though they’re here to stay, from Yesterday, performed by The Beatles (1965), written by John Lennon and Paul McCartney.

Are Cows Getting a Free Pass on Methane Emissions?

January 20, 2025

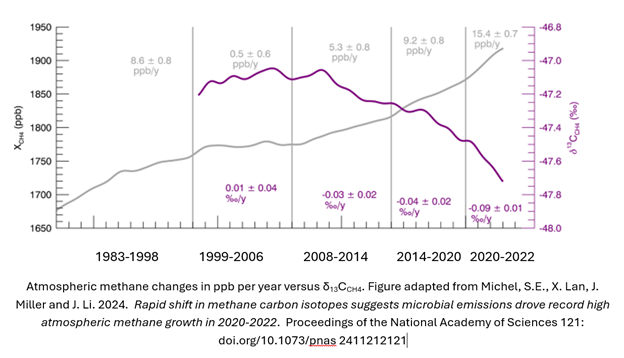

A recent study reported in the Proceedings of the National Academy of Sciences1 convincingly shows that atmospheric methane increases in the last 15 years can be attributed primarily to microbial sources. These comprise ruminants (cows in the main), landfills and wetlands. Yet, policy action on methane curbing has largely been focused on leakage in the natural gas infrastructure. In the US as well as in Canada, policies have fallen short of comprehensive action in the agricultural sector2,3.

Before we discuss the study, first some (hopefully not too nerdy) basics. Methane has the chemical formula CH4. In the common variety, designated 12CH4, the carbon nucleus has 6 protons and 6 neutrons. An isotope, 13CH4, has an additional neutron. The 13CH4 to 12CH4 ratio is used to detect the origin of the CH4. The actual ratio is compared to that of a marine carbonate, an established standard. The comparison is expressed as δ13CCH4, in units of ‰ (parts per thousand), and is always a negative number because all known sources have a lower ratio than that of the standard carbonate. Microbially sourced methane will have values of approximately -90 ‰ to -55 ‰ and methane from natural gas will be in the range -55 ‰ to -35 ‰. This is the measure used to deduce the source of methane in the atmosphere.
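For those who like to see the arithmetic, here is a minimal sketch of the delta calculation and a crude source label. The formula, (sample ratio / standard ratio - 1) × 1000, is the usual definition of delta notation. The reference ratio `R_STANDARD` is an assumed, commonly quoted figure for the carbonate standard, and the function names are my own. The ranges are the approximate ones given above; the cited study uses a far more elaborate model.

```python
# Delta notation for carbon isotopes, plus a rough source label.
# R_STANDARD is an assumed value for the marine carbonate reference ratio;
# the source ranges are the approximate ones quoted in the text above.

R_STANDARD = 0.0112372  # assumed 13C/12C ratio of the reference carbonate


def delta_13c_permil(r_sample, r_standard=R_STANDARD):
    """Return delta-13C in per mil: (R_sample / R_standard - 1) * 1000."""
    return (r_sample / r_standard - 1.0) * 1000.0


def likely_source(delta_permil):
    """Label a delta-13C value using the approximate ranges from the text."""
    if -90.0 <= delta_permil < -55.0:
        return "microbial"
    if -55.0 <= delta_permil <= -35.0:
        return "natural gas"
    return "outside the quoted ranges"


sample_ratio = R_STANDARD * (1 - 0.060)  # hypothetical sample, 6% below the standard ratio
delta = delta_13c_permil(sample_ratio)
print(f"delta 13C = {delta:.1f} per mil -> {likely_source(delta)}")
```

A real atmospheric sample is a blend of sources, so its value sits between the ranges and drifts toward whichever source is growing. That drift is what the study tracks.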

Here is the lightly adapted main figure from the cited study. The data are primarily from the National Oceanic and Atmospheric Administration’s Global Monitoring Laboratory, but as shown in the paper, similar results have been observed from other international sources. The gray line is the atmospheric methane, shown as increasing steadily over decades, but with steeper slopes in recent years. The steeper portion is roughly consistent with the period in which the isotopic ratio becomes increasingly negative. This implies a growing contribution from sources with more negative ratios, which in turn means that the main contributory source is microbial. Note also the sharper isotopic trend in 2020-2022 and, coincidentally or not, the steeper rise in atmospheric methane in those years. The paper authors do not see the correlation as coincidental. They emphatically state: our model still suggests the post-2020 CH4 growth is almost entirely driven by increased microbial emissions.

A quick segue into why methane matters. The global warming potential of methane is 84 times that of CO2 when measured over 20 years, and 28 times when measured over 100 years. Climatologists generally prefer to use the 100-year figure (and I used to as well), but urgency of action dictates that the 20-year figure be used. The reason for the difference is that methane breaks down gradually to CO2 and water, so it is more potent in the early years.
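A minimal sketch of what the choice of time horizon does to the accounting, using only the two multipliers quoted above. The 1,000 tonne release is a hypothetical figure for illustration, and the function name is my own.

```python
# CO2-equivalent of a methane release, using the two warming potentials quoted above.

GWP_METHANE = {20: 84, 100: 28}  # horizon in years -> multiple of CO2


def co2_equivalent_tonnes(methane_tonnes, horizon_years):
    """Return tonnes of CO2 with the same warming effect over the given horizon."""
    return methane_tonnes * GWP_METHANE[horizon_years]


leak_tonnes = 1000  # hypothetical release, for illustration
for horizon in (20, 100):
    print(f"{horizon}-year basis: {co2_equivalent_tonnes(leak_tonnes, horizon):,} t CO2e")
```

The same release counts three times as heavily on the 20-year basis as on the 100-year basis, which is why the choice of horizon is not a mere bookkeeping detail.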

These research findings point to the need for policy to urgently address microbial methane production. This does not mean that we let up on preventing natural gas leakage, the means for which are well understood. The costs are also well known and, in many cases, simply better practice achieves the result. In fact, the current shift to microbial methane being a relatively larger component could well be in response to actions being taken today to limit the other source. But it does mean that federal actions must target microbial sources more overtly than in the past. We will touch on a few of the areas and what may be done.

Landfill gas can be captured and treated. In the US, natural gas prices may be too low to profitably clean landfill methane sufficiently to be put on a pipeline. Part of the problem is that, due to impurities such as CO2, landfill methane has relatively low calorific value, almost always well short of the 1 million BTU per thousand cubic feet standard for pipelines. However, technologies such as that of M2X can “reform” this gas to synthesis gas, and thence to methanol, and a small amount of CO2 is even tolerated (Disclosure: I advise M2X).
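A back-of-the-envelope sketch of why the CO2 dilution matters. It assumes pure methane delivers about 1,010 BTU per cubic foot, that CO2 and other inerts contribute nothing, and that raw landfill gas is about half methane by volume. All three are illustrative assumptions rather than figures from this post.

```python
# Rough heating value of a methane/CO2 blend versus the pipeline benchmark.
# Assumptions: pure methane ~1,010 BTU per cubic foot; CO2 and other inerts add nothing.

METHANE_BTU_PER_CUBIC_FOOT = 1010  # approximate heating value of pure methane, assumed
PIPELINE_BTU_PER_THOUSAND_CUBIC_FEET = 1_000_000  # benchmark quoted in the text


def heating_value_btu_per_mcf(methane_fraction):
    """Return BTU per thousand cubic feet for gas with the given methane volume fraction."""
    return methane_fraction * METHANE_BTU_PER_CUBIC_FOOT * 1000


for fraction in (0.5, 1.0):  # hypothetical raw landfill gas, then pure methane
    value = heating_value_btu_per_mcf(fraction)
    verdict = "meets" if value >= PIPELINE_BTU_PER_THOUSAND_CUBIC_FEET else "falls short of"
    print(f"{fraction:.0%} methane: {value:,.0f} BTU per thousand cubic feet, {verdict} the benchmark")
```

On these assumptions, half-methane gas delivers roughly half the benchmark, so nearly all of the CO2 has to come out before the gas is fit for a pipeline.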

Methane from ruminants (animals with four-compartment stomachs tailored to digest grassy materials) is a more difficult problem. Capture would be operationally difficult. The approach being followed by some is to add an ingredient to the feed to minimize methane production. Hoofprint Biome, a spinout from North Carolina State University, introduces a yeast probiotic to carry enzymes into the rumen to modify the microbial breakdown of the cellulose with minimal methane production. I would expect this more efficient animal to be healthier and more productive (milk or meat). Nailing down these economic benefits could be key to scaling, especially for dairies, which are challenged to be profitable. Net-zero dairies could be in our future.

Early-stage technologies already exist to capture methane from the excrement of farm animals such as pigs. These too could take approaches similar to those proposed for landfill gas, although the chemistry would be somewhat different. Several startups are targeting pyrolysis of methane to hydrogen and carbon. The latter has potentially significant value as carbon black, for various applications such as filler in tires, and biochar as an agricultural supplement. If the methane is from a source such as this, the hydrogen would be considered green in some jurisdictions.

The federal government ought to make it a priority to accelerate scaling of technologies that prevent release of microbial methane into the atmosphere. With early assists, many approaches ought to be profitable. Then it would be a bipartisan play*.

Vikram Rao

*Come together, right now, from Come Together, by The Beatles, 1969, written by Lennon-McCartney

1 Michel, S.E., X. Lan, J. Miller and J. Li. 2024. Rapid shift in methane carbon isotopes suggests microbial emissions drove record high atmospheric methane growth in 2020-2022. Proceedings of the National Academy of Sciences 121: doi.org/10.1073/pnas.2411212121

2 Patricia Fisher https://fordschool.umich.edu/sites/default/files/2022-04/NACP_Fisher_final.pdf

3 Ben Lilliston 2022 https://www.iatp.org/meeting-methane-pledge-us-can-do-more-agriculture

The Bulls Are Running in Natural Gas

January 2, 2025

Ukraine shut down the natural gas pipeline from Russia to southern Europe yesterday. While not unexpected, it is yet another red rag for natural gas bulls. It also means ascendency for liquefied natural gas (LNG) futures and an associated increase in US influence on Europe because, according to the Energy Information Administration, most of the new LNG supply in the world will be from the US. Of course, that means more fodder for the debate in the US on whether LNG exports will increase domestic prices more than mere price elasticity with demand. I see greater demand impact from a different source, but more on that below.

Natural gas usage will not be in decline anytime soon. In fact, usage will steadily increase for the next couple of decades. Much of the incremental usage will be for electricity production, with a business model twist: expect a trend to captive production “behind the meter”. Not having to deal with utilities will speed introduction. Of course, the entire production will need guaranteed offtake, but that will not be much of a hurdle for some of the deep-pocketed application owners.

So, what has changed? Why are fossil fuels not in decline in preference to carbon-free alternatives? Much of the answer is that all fossil fuels are not created equal. Ironically, oil and gas are created by precisely the same mechanism, but their usage and the associated emissions are horses of different colors. At some risk of oversimplification, oil is mostly about transportation and natural gas is mostly about electricity and space heating.

At a first cut, oil usage will reduce when carbon-free transport fuel alternatives take hold. Think electric vehicles, methanol powered boats, biofuels for aviation and so forth. Similarly, natural gas usage reduction relies upon the rate of growth of carbon-free electricity (note my use of carbon-free instead of renewable), which today is almost all solar and wind based. Advanced geothermal is nascent and nuclear is static except for some rumblings among small modular reactors (SMRs).

In the case of oil in transportation, when the switch to battery or hydrogen power arrives, oil will be fully displaced from that vehicle. Not counting hybrids in this discussion, nor lubricating oils. In electricity, however, solar and wind power being intermittent, some other means are needed to fill the gaps. Those means are dominantly natural gas powered today for longer durations (greater than 10 hours). For short durations, and diurnal variations, batteries get the job done for around 2 US cents per kWh. Very affordable, and unlikely to change. To underline the point, solar and wind need natural gas for continuous supply. Until alternatives such as long duration storage, geothermal or SMRs make their presence felt, every installation of solar or wind increases natural gas usage.

As if that were not bad enough, a recent complication is increasing electricity demand. Artificial Intelligence, or AI, and to a greater extent the Generative AI variant, has increased electricity demand dramatically. For example, a search query uses 10 times the energy when employing Gen AI, compared to a similar conventional search. The information is presumably more useful, but the plain fact is that these searches, and other applications such as those in language, are power hogs. The data center folks are trolling for power in geothermal and nuclear. Microsoft went so far as to commission the de-mothballing of the Three Mile Island conventional nuclear facility. Control room picture shows its age. The operators will face what an F-18 pilot would, if asked to fly an F-14 (apologies, just saw the film Top Gun: Maverick).

Almost all the big cloud folks want to use carbon-free power, 24/7/365. Good luck getting that from a utility. Some, such as Google with Fervo Energy geothermal, are enabling supply to the grid and capturing the credit. Others are going “behind the meter”, meaning captive supply not intended for the grid. The menu is geothermal, SMRs and innovative storage systems. All have extended times to get to scale. Is there an option that is more scalable sooner to suit the growth pattern of AI?

Natural gas. A combined cycle plant (electricity both from a gas turbine and from a later in cycle steam turbine) could be constructed in less than 18 months. Carbon capture is feasible, even though the lower CO2 concentrations of 3 to 5% (as compared to 12 to 15% for coal plants) make it costlier (a reason I am bearish on direct air capture, with 0.04% concentration). At the current state of technology, I estimate that capture will add 3 to 4 cents per kWh. This technology will keep improving, but that number is already worth the price of admission, at least in the US, where the base natural gas price is low. Not renewable, but neither is nuclear. You see why I prefer the carbon-free language?

To be behind the meter, the plant would need to be proximal to the data center. Data centers prefer cool weather siting for ambient heat discharge. Reduces power usage. Since natural gas pipelines serve a wide area, this ought not to be a major constraint. However, it could favor producers in the northern latitudes, especially if a rich deposit is currently unconnected to a major pipeline. Favorable deals could be struck especially with long term offtake contracts in part because the gas operator will eliminate the markup by the midstream operator. These conditions could be met in Wyoming, Alberta (Canada) and, of course, Alaska.

We used to refer to natural gas as a bridge to renewables, until that phraseology fell out of vogue. The thinking was that gas could replace coal to provide some CO2 emissions relief (and it did that for the US), and eventually be replaced by renewable energy. The model suggested above does not fit that definition. Those plants will likely not be replaced because they would be essentially carbon neutral. And they would enable a powerful new technology the foundations of which already have been awarded the 2024 Nobel Prize in Physics. Gen AI may well lose some of its luster, but the machine learning underpinnings will survive and continue to deliver. All that will need data crunching. More data centers are firmly in our future.

Those that are still inclined to teeth gnashing on emissions from natural gas production ought to ponder nuclear spent fuel disposal, mining for silica for solar panels and the problem with disposal of disused wind sails, to name just a few. Every form of energy has warts*. We simply need to minimize them.

Vikram Rao

January 2, 2025

*Every rose has its thorn, from Every Rose Has Its Thorn, 1988, performed by Poison, written by Bret Michaels et al.